Name Issue with the Manual Deletion and Recreation of a Service in Service Fabric

A service in Service Fabric can be restarted by deleting and recreating an instance in Service Fabric Explorer. However, it is important to note that not all service template names are identical to the names of the running services in VidiFlow.

Solution: Please double-check the name when recreating the service. Ideally, copy the service name from the deletion confirmation dialog so that it can be pasted when recreating the service.

Deleting a Service from Service Fabric Takes Longer Than Expected

Deleting a service from inside Service Fabric results in that service no longer being displayed in Service Fabric. However, the service may still be running. Recreating the service in Service Fabric Explorer during this period could mean that the existing service is reused.

Solution: Ensure the service has actually been deleted by checking the Task Manager on the host machine.

Workflows Cancelled in VidiFlow Monitoring Remain in Camunda Queue

Solution: Use the following PowerShell script to delete cancelled workflows from Camunda. Replace KUBERNETESENDPOINT and the workflow ID (the processDefinitionId value) where required:

PowerShell Script

Clear-Host

# Adjust the endpoint and the process definition ID to your environment.
$baseUri = "http://KUBERNETESENDPOINT:31080/engine-rest/process-instance"
$definitionId = "WF_FileIngest:29:262dac29-1366-11e9-8326-fa5a5ad76dd7"

$workflowinstances = Invoke-RestMethod -Uri "$baseUri/?processDefinitionId=$definitionId"
"Startcount: " + $workflowinstances.Count

foreach ($instance in $workflowinstances) {
    "Deleting instance " + $instance.id

    # Cancel the activity first, skipping custom listeners and I/O mappings.
    $result1 = Invoke-RestMethod -Uri ("$baseUri/" + $instance.id + "/modification") -Method Post -ContentType "application/json" -Body '{"skipCustomListeners": true,"skipIoMappings": true,"instructions": [{"type": "cancel","activityId": "Task_0a7mr03"}]}'
    $result1

    # Then delete the process instance itself.
    $result = Invoke-RestMethod -Uri ("$baseUri/" + $instance.id) -Method Delete
    $result
}

$workflowinstances = Invoke-RestMethod -Uri "$baseUri/?processDefinitionId=$definitionId"
"Endcount " + $workflowinstances.Count

ConfigPortal WebAPI is Slow and Services are Displayed Incorrectly on Service Fabric

There are two things to check when services fail to start, or when the ConfigPortal WebAPI and Swagger are extremely slow to respond:

1. Garbage collection in the Git repository: to improve performance, run the following command in Cmd.exe on the machine where the GitRepo is located (git must be installed):

Cmd.exe Script

git -C \\PATH\TO\GITREPO\ gc

2. Empty the CachedData table in ConfigPortal’s Cache DB: truncate table CachedData

Afterwards, restart the WebAPI and Notification services of ConfigPortal.

CreateItem Vidispine Requires a Long Time

The default timeout until Vidispine registers a file as closed / not growing is 5 minutes. This parameter is called closeLimit in Vidispine. It can be modified by sending a PUT to {{k8surl}}:{{vidispinePort}}/API/storage/STORAGE-VX-{{ID}}/metadata/closeLimit with a plain text body of 1 (for 1 minute). Please ensure that the correct storage ID is used in the URL.
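As a rough illustration of the request described above, the following Python sketch builds the PUT call with the standard library. The host name, port, and storage ID in the example are placeholders rather than values from a real system, and authentication is left out, so both need to be adapted to the environment.

```python
import urllib.request


def build_close_limit_request(base_url, storage_id, minutes):
    """Build (but do not send) the PUT request that sets closeLimit."""
    url = f"{base_url}/API/storage/{storage_id}/metadata/closeLimit"
    return urllib.request.Request(
        url,
        data=str(minutes).encode("utf-8"),  # plain text body, e.g. b"1"
        method="PUT",
        headers={"Content-Type": "text/plain"},
    )


if __name__ == "__main__":
    # Hypothetical endpoint and storage ID, for illustration only.
    req = build_close_limit_request(
        "http://k8s.example.local:31060", "STORAGE-VX-1", 1
    )
    print(req.get_method(), req.full_url)
    # Sending is intentionally left out; with credentials configured it
    # could be done with urllib.request.urlopen(req).
```

Building the request separately from sending it makes it easy to verify the URL and storage ID before anything is changed on the Vidispine side.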

Changing Service Fabric Configuration on a Running Local Cluster

To edit configuration details of an existing cluster, such as the number of ports available to clients of the cluster, proceed as follows:

1. Duplicate the cluster configuration JSON and give the copy a distinct name.

2. Modify the copied cluster JSON and give it a new version number at the top of the JSON file. Example: change 2.0.0 to 2.1.0

3. Execute TestConfiguration.ps1 with two parameters:

   - ClusterConfigFilePath "path to edited json"

   - OldClusterConfigFilePath "path to original json"

4. Execute the following command in PowerShell: Connect-ServiceFabricCluster

5. This should return "True" and a few connection and gateway details.

6. Then execute Start-ServiceFabricClusterConfigurationUpgrade -ClusterConfigPath "path to edited json".
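Steps 1 and 2 above (duplicating the configuration and raising its version) can be sketched in Python as follows. This is only an illustration: the key name clusterConfigurationVersion is an assumption about where the version is stored and should be checked against the actual file, and the file names in the usage example are hypothetical.

```python
import json
import shutil
from pathlib import Path

# Assumption: the version sits under this top-level key; verify it against
# your own cluster configuration file before relying on this.
VERSION_KEY = "clusterConfigurationVersion"


def bump_minor(version):
    """Return the version with its minor part increased, e.g. 2.0.0 -> 2.1.0."""
    major, minor, _patch = (int(part) for part in version.split("."))
    return f"{major}.{minor + 1}.0"


def duplicate_with_new_version(original, edited):
    """Copy the original JSON to a new file and bump the version in the copy."""
    shutil.copyfile(original, edited)
    # utf-8-sig tolerates a byte order mark, which some editors add.
    config = json.loads(Path(edited).read_text(encoding="utf-8-sig"))
    config[VERSION_KEY] = bump_minor(config[VERSION_KEY])
    Path(edited).write_text(json.dumps(config, indent=2), encoding="utf-8")
    return config[VERSION_KEY]
```

A call such as duplicate_with_new_version("ClusterConfig.json", "ClusterConfig.2.1.0.json") leaves the original untouched, so it can still be passed as OldClusterConfigFilePath in step 3. The other configuration changes still have to be made in the copy by hand.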

ConfigPortal WebAPI has Issues Writing to GitRepository

If the ConfigPortal WebAPI fails when writing .git files, this may be because some file systems (for example, Isilon) do not allow hidden files to be written.

Solution: Ask the system administrator whether different user permissions can be granted. Alternatively, use a standard SMB share for the GitRepository.

VidiFlow Installation Fails with Setup Error MSI 1603

ERROR

ERROR: Execution of deployment step with name: 'Deploy ______ Service' has failed.

ERROR: [________ deployment failed with MSI error code 1603].

Solution: Should these errors occur, check the log file of the service's installer. By default, the log file is located in VidiFlow installation folder/drop \Deployment\Scripts\logs.

Every Service in Service Fabric Displayed as Red

Solution: The most common point of failure for widespread errors is the Authentication Service, which relies on the database. Check whether the database is running and reachable with the VidiFlow user. Alternatively, check the Authentication log file for more specific errors.

Service Fabric UIs Remain Empty / Show Only White

Solution: Ensure that the exact fully qualified domain name specified when setting up Service Fabric is used. Anything that goes over the reverse proxy port (default 19081) fails when the domain name is not exactly as specified.

Kubernetes Nodes Stuck in "Registering" State

Solution: Ensure that the ports required for Kubernetes communication are open in the Linux firewall.

Staging in ConfigPortal Fails with "System name is more than 1"

For staging, both the system name and its respective GUID must be identical. If the names are set manually in multiple environments without using staging, the names may have the same string value but not the same GUID.

Solution: Ideally, set the system name automatically through staging. If the names are already set, use Swagger in the first environment to get the system name and GUID and update them in the new environment, or delete the system name in the target environment and use staging to set it again.

MediaPortal Connector Does not Sync Item to MediaPortal

The MediaPortal Connector will at times filter out items, preventing them from synchronizing to MediaPortal. There are multiple reasons why this may occur:

- No proxy shape tag on a video item

- V3_hidden flag set to “true”

- MediaType / V3_ExpectedMediaType not “video”

- Vidispine title metadata is empty (this only applies to releases prior to MediaPortal 19.1).

Agents in Service Fabric Restart When GETting a Use Case Configuration

Agents in Service Fabric are restarted when GETting a use case configuration. The log file indicates an “Authentication Issue”.

Please check the VidiFlow Basic Agent for “client_prefix” via Swagger. The issue may occur when a 19.1 version is installed but 19.2 agents are running. Because the SDK used to create workers includes authentication components introduced in 19.2, this can cause an incompatibility with a 19.1 build, which uses the default authService Identity configuration.

Solution: The following steps were taken to fix the issue manually:

1. Copy the …\Scripts\Configuration\Identity folder content to the Scripts/Configuration folder of the 19.1 setup.

2. Open the authentication service Swagger page and authorize the Swagger page.

3. Execute Client / "Delete /v1/Client" for:

   - file_watcher

   - pf_mediaportalnotifier_service

   - pf_mediaportalsendto_service

   - VidispineFileNotifications

   - pf_basic_agent

4. Execute .\Bootstrapper.ps1 …. -singleStepToExecute "Insert Identity Configurations" in PowerShell.

Camunda Engine Reports "<var> was found more than once in the input"

When sending HTTP POST to:

EXAMPLE

'http://ABC.EFG.HIJK.com:LMNOP/engine-rest/external-task/ffbbe30f-af92-11e9-94d9-8ab94683cec2/failure'. Content: '{"workerId":"platformWorkerId","errorMessage":"Agent taskEvent ffbbe30f-af92-11e9-94d9-8ab94683cec2 failed: The data contract type 'Platform.Vidispine.TranscodeFile.Contracts.TranscodeProxyInternalTask' cannot be deserialized because the data member 'ItemId' was found more than once in the input.","errorDetail":"Agent taskEvent ffbbe30f-af92-11e9-94d9-8ab94683cec2 failed: The data contract type 'Platform.Vidispine.TranscodeFile.Contracts.TranscodeProxyInternalTask' cannot be deserialized because the data member 'ItemId' was found more than once in the input.","retries":0,"retryTimeout":0}'

Solution: This may occur if the Camunda workflow engine parses the workflow incorrectly. Try to identify the offending action and remove it in the workflow, add it again, and then test the new workflow version.
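When hunting for the offending action, it can help to confirm which member is duplicated in the task input. The following Python sketch reports keys that occur more than once within a JSON object. It assumes the task input is available as JSON text, which may not match how the data is actually serialized in a given setup, and the sample payload is made up for illustration.

```python
import json
from collections import Counter


def duplicate_keys(json_text):
    """Return the set of keys that occur more than once in any JSON object."""
    duplicates = set()

    def check_pairs(pairs):
        # Called once per JSON object with its (key, value) pairs in order.
        counts = Counter(key for key, _ in pairs)
        duplicates.update(key for key, n in counts.items() if n > 1)
        return dict(pairs)

    json.loads(json_text, object_pairs_hook=check_pairs)
    return duplicates


if __name__ == "__main__":
    # Hypothetical payload in which 'ItemId' appears twice.
    sample = '{"ItemId": "VX-1", "Profile": "proxy", "ItemId": "VX-1"}'
    print(duplicate_keys(sample))  # prints {'ItemId'}
```

A plain json.loads call would silently keep the last value, which is why the pairs hook is used to see every key before duplicates are collapsed.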

RabbitMQ Error While Waiting for Mnesia Tables on Startup

An issue exists in which RabbitMQ logs an error while waiting for Mnesia tables. Retries are then performed.

EXAMPLE

"Waiting for Mnesia tables for 30000 ms, x retries left".

Solution

This issue occurs when RabbitMQ has problems synchronizing with other running instances, or when it previously ran with more instances than it does now.

The simplest approach to solving this issue is to throw away the RabbitMQ queue data and allow it to perform a fresh resync.

1. First, scale down RabbitMQ to 0 instances.

2. Then, on each node on which the local rabbitmq-data folder resides, run "cd /vpmsdata/rabbitmq-data/data/".

3. Then, delete the data inside the folder with "rm -rf *".

4. Finally, scale up again and check that the error is no longer present in the logs.
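For nodes where the cleanup is scripted rather than typed by hand, the deletion step could look roughly like the Python sketch below. It is an illustration only: the dry-run default and the function name are additions made here, and unlike "rm -rf *" it also removes hidden entries. It should only be run after RabbitMQ has been scaled down to 0 instances.

```python
import shutil
from pathlib import Path


def clear_directory(data_dir, dry_run=True):
    """Delete everything inside data_dir but keep the directory itself.

    Returns the names of the entries that were (or would be) removed.
    """
    removed = []
    for entry in sorted(Path(data_dir).iterdir()):
        removed.append(entry.name)
        if dry_run:
            continue
        if entry.is_dir() and not entry.is_symlink():
            shutil.rmtree(entry)
        else:
            entry.unlink()
    return removed


if __name__ == "__main__":
    # Path taken from the steps above; the dry run only lists the entries.
    data_dir = "/vpmsdata/rabbitmq-data/data/"
    if Path(data_dir).is_dir():
        print(clear_directory(data_dir, dry_run=True))
```

Running with dry_run=True first shows what would be deleted, which is a cheap safeguard against pointing the script at the wrong folder.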

RabbitMQ or Other Stateful Services with Local Data do not Recognize the Mount

In some cases when modifying the local mount directory, RabbitMQ or other stateful services with local mountpoints will not start.

Example error message:

"mount failed: exit status 32 Mounting command: mount Mounting arguments: -o bind /vpmsdata/rabbitmq-data/data /var/lib/kubelet/pods/GUID/volumes/kubernetes.io~local-volume/pv-7ttjp Output: mount: mount(2) failed: No such file or directory"

Solution: This mostly happens on clusters set up with Rancher. It may help to restart the kubelet pods on the affected machines to recognize the correct local mount point.

Kubernetes Reports Error "PLEG is not healthy"

PLEG (Pod Lifecycle Event Generator) is a very basic health check which runs docker ps in the background on every node and updates a timestamp on completion. If this timestamp is not updated within a set interval (3 minutes by default), the health check fails and Kubernetes may not behave as expected.
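The timestamp logic described above can be modelled in a few lines of Python. This is a simplified illustration of the idea, not the kubelet's actual implementation; the function and constant names are made up for the example.

```python
from datetime import datetime, timedelta

# Default interval named in the text above (3 minutes).
PLEG_THRESHOLD = timedelta(minutes=3)


def pleg_is_healthy(last_update, now, threshold=PLEG_THRESHOLD):
    """Healthy if the last completed check is not older than the threshold."""
    return now - last_update <= threshold


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 12, 0, 0)
    # Last update 2 minutes ago: still within the interval.
    print(pleg_is_healthy(now - timedelta(minutes=2), now))
    # Last update 4 minutes ago: the check is considered failed.
    print(pleg_is_healthy(now - timedelta(minutes=4), now))
```

The model makes clear why a hanging docker ps leads to the error: the timestamp stops advancing, so the age of the last update eventually exceeds the threshold even though nothing has crashed.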

Solution: Try removing unused containers with the following command on every node:

docker container rm $(docker container ls -aq)

The node where the PLEG has failed will likely be stuck in the process of removing containers. If that's the case, a reboot may help, then run the command again afterwards.

Long Directory Filenames Cannot be Deleted in Windows

Even with long filename support enabled in Windows, it is possible that Service Fabric applications create files and folder structures that are too long for Windows to handle.

Solution: A possible solution involving the use of robocopy is described in the SuperUser thread referenced below and works for deleting obscure Service Fabric Logstash folders:

https://superuser.com/a/1048242/403461

VidiFlow Installation Reports Error “settings.xml already exists”

Installing VidiFlow into Service Fabric fails with the error "settings.xml for CamundaBroker (or other agent) already exists on the host".

Solution: In PowerShell:

Connect-ServiceFabricCluster

Get-ServiceFabricImageStoreContent   # shows all packages in the Service Fabric image store

Remove-ServiceFabricApplicationPackage -ApplicationPackagePathInImageStore "[Package Name]"   # remove all versions of the package

Then rerun the deployment.

High resource usage on DB "VPMS_ProcessEngine"

The Prometheus plugin in Camunda can cause high resource usage because it continuously writes to and reads from the RU_METER_LOG table in the VPMS_Platform_ProcessEngine database. This may also cause storage usage to grow quickly.

Solution: Check whether old entries are properly cleaned up from the table.

Alternatively, the reporting can be disabled (not recommended): locate the Prometheus plugin in the Camunda-Config configmap and comment it out:

<plugin>
  <class>io.digitalstate.camunda.prometheus.PrometheusProcessEnginePlugin</class>
  <properties>
    <property name="port">9199</property>
    <property name="camundaReportingIntervalInSeconds">15</property>
    <property name="collectorYmlFilePath">/camunda-prometheus/prometheus-metrics.yml</property>
  </properties>
</plugin>

Large Number of Thumbnails: Thumbnail Hierarchy

By default, thumbnails in VS / VidiFlow are stored as folders, one for each item, in a flat directory. However, once a few million assets have been ingested, and depending on your storage solution, this may result in issues.

Solution: Vidispine offers a system property called "thumbnailHierarchy", which when set automatically splits folder IDs into subfolders:

https://apidoc.vidispine.com/latest/system/thumbnails.html#using-a-tree-structure-for-thumbnails

This can be changed to a hierarchy level of 10000. Vidispine has provided a script which moves existing keyframe folders into the same storage structure.

Call for Thumbnail Hierarchy Value

PUT /API/configuration/properties

Python Script for Moving Keyframe Folders to Same Storage

#!/usr/bin/python
import os
import shutil

# Collect all folders in the current (flat) thumbnail directory.
dirs = [os.path.join(".", o) for o in os.listdir(".") if os.path.isdir(os.path.join(".", o))]
for dir in dirs:
    parts = dir.split("-")
    if len(parts) != 2:
        continue
    # Build the hierarchical target path below a temporary folder.
    new_dir = "temp/"
    if len(parts[1]) >= 5:
        new_dir += parts[0] + "-" + parts[1][:-4] + "/" + parts[1][-4:]
    elif len(parts[1]) == 4:
        new_dir += parts[0] + "-0/" + parts[1]
    elif len(parts[1]) == 3:
        new_dir += parts[0] + "-0/0" + parts[1]
    elif len(parts[1]) == 2:
        new_dir += parts[0] + "-0/00" + parts[1]
    elif len(parts[1]) == 1:
        new_dir += parts[0] + "-0/000" + parts[1]
    shutil.move(dir, new_dir)

# Move the new top-level folders back and remove the temporary folder.
dirs = [os.path.join("./temp", o) for o in os.listdir("./temp") if os.path.isdir(os.path.join("./temp", o))]
for dir in dirs:
    shutil.move(dir, ".")
shutil.rmtree("./temp")
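To make the resulting layout easier to follow, the name mapping applied by the script above can be written as a small standalone function. This is a sketch that mirrors the script's string handling for regular folder names; the folder names used in the example are illustrative, and names without a numeric part after the hyphen are not handled.

```python
def hierarchical_path(folder_name):
    """Map a flat folder name such as 'VX-12345' to 'VX-1/2345'."""
    prefix, number = folder_name.split("-")
    if len(number) >= 5:
        # The last four digits become the subfolder, the rest stays on top.
        return f"{prefix}-{number[:-4]}/{number[-4:]}"
    # Shorter IDs go into the '-0' bucket, zero-padded to four digits.
    return f"{prefix}-0/{number.zfill(4)}"


if __name__ == "__main__":
    for name in ("VX-42", "VX-1234", "VX-12345", "VX-1234567"):
        print(name, "->", hierarchical_path(name))
```

With four digits per subfolder, each top-level folder holds at most 10000 entries, which matches the hierarchy level mentioned above.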

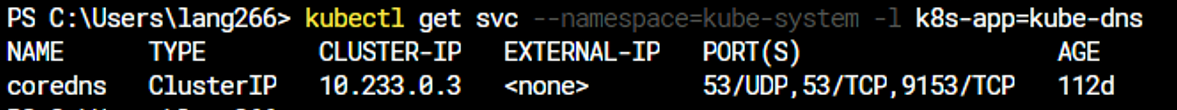

DNS resolution broken after kubespray upgrade

Double-check that the kubelet config has the right IP for the DNS server.

First, query the IP of coredns with this command:

Then, on each node where kubelet is running, check in /etc/kubernetes/kubelet-config.yaml that the DNS IP under clusterDNS is the same. If it is not, change it to the correct IP from above and restart kubelet. Repeat this on each kubelet node.

Then restart all running pods in the cluster.
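The comparison on each node can be scripted. The Python sketch below reads the clusterDNS entries from the text of a kubelet config and checks them against the coredns IP. It assumes clusterDNS is written as a block-style YAML list (one "- IP" per line) and uses a deliberately simple line parser instead of a YAML library; the IP addresses in the example are placeholders.

```python
def cluster_dns_entries(kubelet_config_text):
    """Return the IPs listed under 'clusterDNS' in a kubelet config file."""
    entries = []
    in_block = False
    for line in kubelet_config_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("clusterDNS:"):
            in_block = True
            continue
        if in_block:
            if stripped.startswith("- "):
                entries.append(stripped[2:].strip().strip("'\""))
            elif stripped:
                break  # next key reached, the list is finished
    return entries


def dns_matches(kubelet_config_text, coredns_ip):
    """True if the coredns IP is among the configured clusterDNS entries."""
    return coredns_ip in cluster_dns_entries(kubelet_config_text)


if __name__ == "__main__":
    # Example content; on a node, read /etc/kubernetes/kubelet-config.yaml.
    sample = "clusterDNS:\n- 10.233.0.3\nclusterDomain: cluster.local\n"
    print(dns_matches(sample, "10.233.0.3"))
```

If the function returns False on a node, that node's kubelet config is the one to correct before restarting kubelet.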
